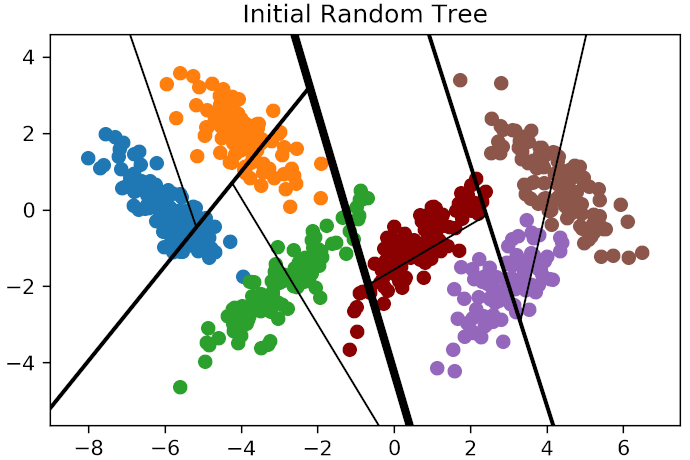

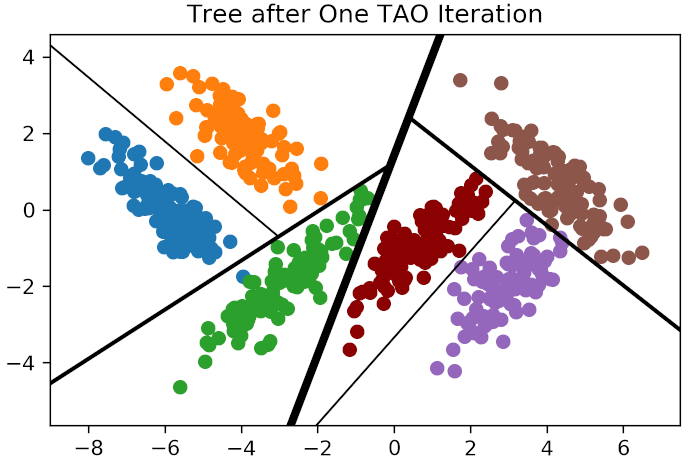

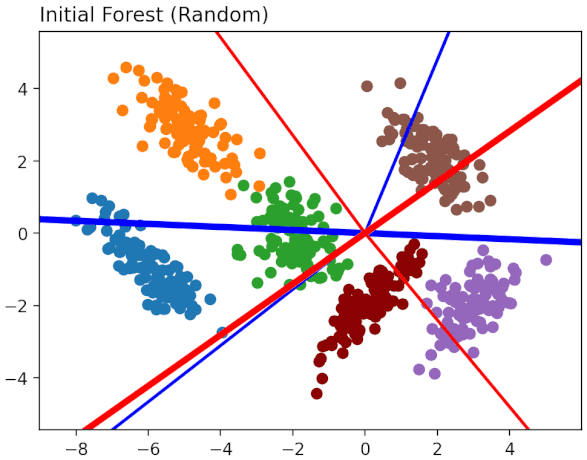

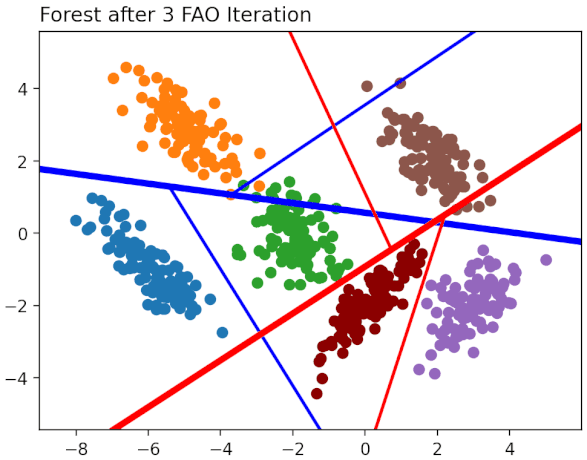

This page collects papers describing the "tree alternating optimisation" (TAO) algorithm, a new way to train many types of decision trees (axis-aligned, oblique, etc.) and tree-based models, so that they optimise a desired objective function and regularisation term or constraint. TAO can be used by itself to learn a single tree, or as the base learner of a forest (tree ensemble). It vastly outperforms the traditional, greedy recursive partitioning algorithms such as CART or C4.5 (in isolation or as part of a forest), and allows one to learn models that strike a good balance between accuracy and interpretability.

Coming soon.

Carreira-Perpiñán, M. Á. and Tavallali, P. (2018): Alternating optimization of decision trees, with application to learning sparse oblique trees. Advances in Neural Information Processing Systems 31 (NeurIPS 2018), pp. 1211-1221.

[external link] [paper preprint] [supplementary material] [poster]

Zharmagambetov, A. and Carreira-Perpiñán, M. Á. (2020): Smaller, more accurate regression forests using tree alternating optimization. 37th Int. Conf. Machine Learning (ICML 2020), pp. 11398-11408.

[external link] [paper preprint] [slides] [supplementary material] [video]

Carreira-Perpiñán, M. Á. and Zharmagambetov, A. (2020): Ensembles of bagged TAO trees consistently improve over random forests, AdaBoost and gradient boosting. ACM-IMS Foundations of Data Science Conf. (FODS 2020), pp. 35-46.

[external link] [paper preprint] [slides]

Gabidolla, M., Zharmagambetov, A. and Carreira-Perpiñán, M. Á. (2020): Boosted sparse oblique decision trees. Bay Area Machine Learning Symposium (BayLearn 2020).

[external link] [paper preprint] [video]

Carreira-Perpiñán, M. Á. and Hada, S. S. (2021): Counterfactual explanations for oblique decision trees: exact, efficient algorithms. 35th AAAI Conf. Artificial Intelligence (AAAI 2021), pp. 6903-6911.

[external link] [paper preprint] [slides] [poster] [Python implementation (coming soon)]

Longer version: Mar. 1, 2021, arXiv:2103.01096: [external link] [paper preprint]

Zharmagambetov, A. and Carreira-Perpiñán, M. Á. (2021): Learning a tree of neural nets. IEEE Int. Conf. on Acoustics, Speech and Signal Processing (ICASSP 2021), pp. 3140-3144.

[external link] [paper preprint] [slides] [© IEEE]

Zharmagambetov, A., Gabidolla, M. and Carreira-Perpiñán, M. Á. (2021): Improved boosted regression forests through non-greedy tree optimization. Int. Joint Conf. on Neural Networks (IJCNN 2021).

[external link] [paper preprint] [slides] [© IEEE]

Zharmagambetov, A., Hada, S. S., Gabidolla, M. and Carreira-Perpiñán, M. Á. (2021): Non-greedy algorithms for decision tree optimization: an experimental comparison. Int. Joint Conf. on Neural Networks (IJCNN 2021).

[external link] [paper preprint] [slides] [© IEEE]

Older version: An experimental comparison of old and new decision tree algorithms, Mar. 20, 2020, arXiv:1911.03054: [external link] [paper preprint]

Hada, S. S., Carreira-Perpiñán, M. Á. and Zharmagambetov, A. (2021): Understanding and manipulating neural net features using sparse oblique classification trees. IEEE Int. Conf. Image Processing (ICIP 2021), pp. 3707-3711.

[external link] [paper preprint] [slides] [poster] [© IEEE]

Zharmagambetov, A. and Carreira-Perpiñán, M. Á. (2021): A simple, effective way to improve neural net classification: ensembling unit activations with a sparse oblique decision tree. IEEE Int. Conf. Image Processing (ICIP 2021), pp. 369-373.

[external link] [paper preprint] [slides] [poster] [© IEEE]

Zharmagambetov, A., Gabidolla, M. and Carreira-Perpiñán, M. Á. (2021): Improved multiclass Adaboost for image classification: the role of tree optimization. IEEE Int. Conf. Image Processing (ICIP 2021), pp. 424-428.

[external link] [paper preprint] [slides] [poster] [© IEEE]

Hada, S. S. and Carreira-Perpiñán, M. Á. (2021): Exploring counterfactual explanations for classification and regression trees. Int. Workshop and Tutorial on eXplainable Knowledge Discovery in Data Mining (at ECML 2021).

[external link] [paper preprint] [slides]

Zharmagambetov, A., Gabidolla, M. and Carreira-Perpiñán, M. Á. (2021): Softmax Tree: an accurate, fast classifier when the number of classes is large. Conf. Empirical Methods in Natural Language Processing (EMNLP 2021), pp. 10730-10745.

[external link] [paper preprint] [slides] [poster]

Zharmagambetov, A. and Carreira-Perpiñán, M. Á. (2022): Learning interpretable, tree-based projection mappings for nonlinear embeddings. 25th Int. Conf. Artificial Intelligence and Statistics (AISTATS 2022), pp. 9550-9570.

[external link] [paper preprint] [slides] [poster] [animations] [video]

Hada, S. S. and Carreira-Perpiñán, M. Á. (2022): Interpretable image classification using sparse oblique decision trees. IEEE Int. Conf. on Acoustics, Speech and Signal Processing (ICASSP 2022), pp. 2759-2763.

[external link] [paper preprint] [slides] [poster] [© IEEE]

Gabidolla, M. and Carreira-Perpiñán, M. Á. (2022): Pushing the envelope of gradient boosting forests via globally-optimized oblique trees. IEEE Conf. Computer Vision and Pattern Recognition (CVPR 2022), pp. 285-294.

[external link] [paper preprint] [supplementary material] [slides] [poster] [© IEEE]

Gabidolla, M., Zharmagambetov, A. and Carreira-Perpiñán, M. Á. (2022): Improved multiclass AdaBoost using sparse oblique decision trees. Int. Joint Conf. on Neural Networks (IJCNN 2022).

[external link] [paper preprint] [slides] [© IEEE]

Hada, S. S. and Carreira-Perpiñán, M. Á. (2022): Sparse oblique decision trees: a tool to interpret natural language processing datasets. Int. Joint Conf. on Neural Networks (IJCNN 2022).

[external link] [paper preprint] [slides] [© IEEE]

Gabidolla, M. and Carreira-Perpiñán, M. Á. (2022): Optimal interpretable clustering using oblique decision trees. ACM SIGKDD Int. Conf. on Knowledge Discovery and Data Mining (KDD 2022), pp. 400-410.

[external link] [paper preprint] [slides] [poster]

Zharmagambetov, A. and Carreira-Perpiñán, M. Á. (2022): Semi-supervised learning with decision trees: graph Laplacian tree alternating optimization. Advances in Neural Information Processing Systems 35 (NeurIPS 2022), pp. 2392-2405.

[external link] [external link2] [paper preprint] [supplementary material] [poster] [video]

Extended abstract at the Bay Area Machine Learning Symposium (BayLearn 2022): [paper preprint] [poster]

Carreira-Perpiñán, M. Á. and Hada, S. S. (2023): Very fast, approximate counterfactual explanations for decision forests. 37th AAAI Conf. Artificial Intelligence (AAAI 2023), pp. 6935-6943.

[external link] [paper preprint] [slides] [slides] [Python implementation (coming soon)]

Longer version: Mar. 5, 2023, arXiv:2303.02883: [external link] [paper preprint]

Carreira-Perpiñán, M. Á., Gabidolla, M. and Zharmagambetov, A. (2023): Towards better decision forests: Forest Alternating Optimization. IEEE Conf. Computer Vision and Pattern Recognition (CVPR 2023), pp. 7589-7598.

[external link] [OpenReview] [paper preprint] [supplementary material] [poster] [slides] [© IEEE]

CVPR 2023 Art Gallery videos:

Hada, S. S., Carreira-Perpiñán, M. Á. and Zharmagambetov, A. (2024): Sparse oblique decision trees: a tool to understand and manipulate neural net features. Data Mining and Knowledge Discovery, 38:2863-2902.

[external link] [paper preprint] [media article]

Also as: Jan. 30, 2023, arXiv:2104.02922: [external link] [paper preprint]

Many of the figures in the publisher's version are badly messed up, with wrong labels. The arXiv paper has the correct figures.

Short version at the Workshop on Human-Interpretable AI (KDD 2024): [external link] [paper preprint] [poster]

Gazizov, K., Zharmagambetov, A. and Carreira-Perpiñán, M. Á. (2023): Pros and cons of soft vs hard decision trees. Bay Area Machine Learning Symposium (BayLearn 2023).

[external link] [paper preprint] [poster]

Kairgeldin, R., Gabidolla, M. and Carreira-Perpiñán, M. Á. (2024): Adaptive Softmax Trees for many-class classification. 40th Conf. Uncertainty in Artificial Intelligence (UAI 2024), pp. 1825-1841.

[external link] [external link2] [paper preprint] [poster]

Extended abstract at the Bay Area Machine Learning Symposium (BayLearn 2024): [external link] [paper preprint] [poster]

Gabidolla, M., Zharmagambetov, A. and Carreira-Perpiñán, M. Á. (2024): Beyond the ROC curve: classification trees using Cost-Optimal Curves, with application to imbalanced datasets. 37th Int. Conf. Machine Learning (ICML 2024), pp. 14343-14364.

[external link] [external link2] [paper preprint] [poster]

Extended abstract at the Bay Area Machine Learning Symposium (BayLearn 2023): [external link] [paper preprint] [poster]

Kairgeldin, R. and Carreira-Perpiñán, M. Á. (2024): Bivariate decision trees: smaller, interpretable, more accurate. ACM SIGKDD Int. Conf. on Knowledge Discovery and Data Mining (KDD 2024), pp. 1336-1347.

[external link] [paper preprint] [poster] [slides] [video]

Short version at the Workshop on Interpretable AI: Past, Present and Future (NeurIPS 2024): [external link] [paper preprint] [poster]

Extended abstract at the Bay Area Machine Learning Symposium (BayLearn 2023): [external link] [paper preprint] [poster]

Carreira-Perpiñán, M. Á. and Gazizov, K. (2024): The tree autoencoder model, with application to hierarchical data visualization. Advances in Neural Information Processing Systems 37 (NeurIPS 2024), pp. 32281-32306.

[external link] [external link2] [paper preprint] [poster] [slides] [video]

Extended abstract at the Bay Area Machine Learning Symposium (BayLearn 2024): [external link] [paper preprint] [poster]

Gabidolla, M. and Carreira-Perpiñán, M. Á. (2025): Generalized additive models via direct optimization of regularized decision stump forests. 38th Int. Conf. Machine Learning (ICML 2025), pp. 18047-18061.

[external link] [external link2] [paper preprint] [poster]

Kairgeldin, R. and Carreira-Perpiñán, M. Á. (2025): Neurosymbolic models based on hybrids of convolutional neural networks and decision trees. 19th Conf. Neurosymbolic Learning and Reasoning (NeSy 2025), pp. 796-813.

[external link] [external link2] [paper preprint] [slides] [poster]

Sundaram, J. P. S., Gabidolla, M., Carreira-Perpiñán, M. Á. and Cerpa. A. (2025): COMNETS: COst-sensitive decision trees approach to throughput optimization for Multi-radio IoT NETworkS. 30th IEEE Int. Conf. Emerging Technologies and Factory Automation (ETFA 2025), pp. 1-8.

[external link] [paper preprint] [poster] [© IEEE]

Longer version: Feb. 5, 2025, arXiv:2502.03677: [external link] [paper preprint]

Sundaram, J. P. S., Zharmagambetov, A., Gabidolla, M., Carreira-Perpiñán, M. Á. and Cerpa. A. (2025): MARS: Multi-radio Architecture with ML-powered Radio Selection for Mesoscale IoT Applications. 30th IEEE Int. Conf. Emerging Technologies and Factory Automation (ETFA 2025), pp. 1-8.

[external link] [paper preprint] [poster] [© IEEE]

Longer version: Sep. 26, 2023, arXiv:2409.18043: [external link] [paper preprint]

Kairgeldin, R. and Carreira-Perpiñán, M. Á. (2025): Fast image vector quantization using sparse oblique regression trees. IEEE Int. Conf. Image Processing (ICIP 2025), pp. 1402-1407.

[external link] [paper preprint] [slides] [video] [poster] [© IEEE]

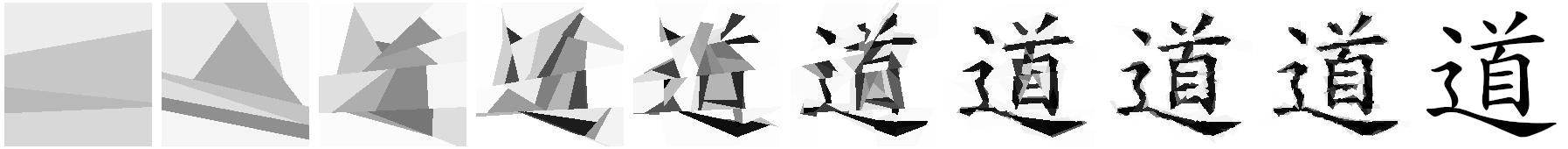

Chan, E., Gabidolla, M. and Carreira-Perpiñán, M. Á. (2025): Oblique decision trees as an image model for cubist image restyling. IEEE Int. Conf. Image Processing (ICIP 2025), pp. 1708-1713.

[external link] [paper preprint] [slides] [more slides] [video] [animations] [poster] [© IEEE]

Extended abstract "Cubist-style image effects with oblique decision trees" at the Bay Area Machine Learning Symposium (BayLearn 2024): [external link] [paper preprint] [poster] [artwork video] [news article]

Cubist-style image effects project page and art gallery at Edric Chan's web page

Gazizov, K. and Carreira-Perpiñán, M. Á. (2025): A faster training algorithm for regression trees with linear leaves, and an analysis of its complexity. Advances in Neural Information Processing Systems 38 (NeurIPS 2025), to appear.

[external link] [external link2] [paper preprint] [poster] [slides] [video]