The problem we consider in this work is sampling-based antijoin cardinality estimation. We explore how a set of prior (anti)join queries and their results can be used to devise better sampling-based antijoin cardinality estimators for query optimization. To this end, we introduce a novel sampling-based estimator for antijoin cardinality that provides the required generality and efficiency to be implemented in a query optimizer. The proposed estimator integrates query workload information using a Bayesian statistics framework and designs an efficient algorithm for sampling from a hypergeometric distribution. The use of prior workload information eliminates the time-consuming requirement of building a model of the data for each query, while the sampling algorithm makes the procedure efficient to be used in a query optimizer.

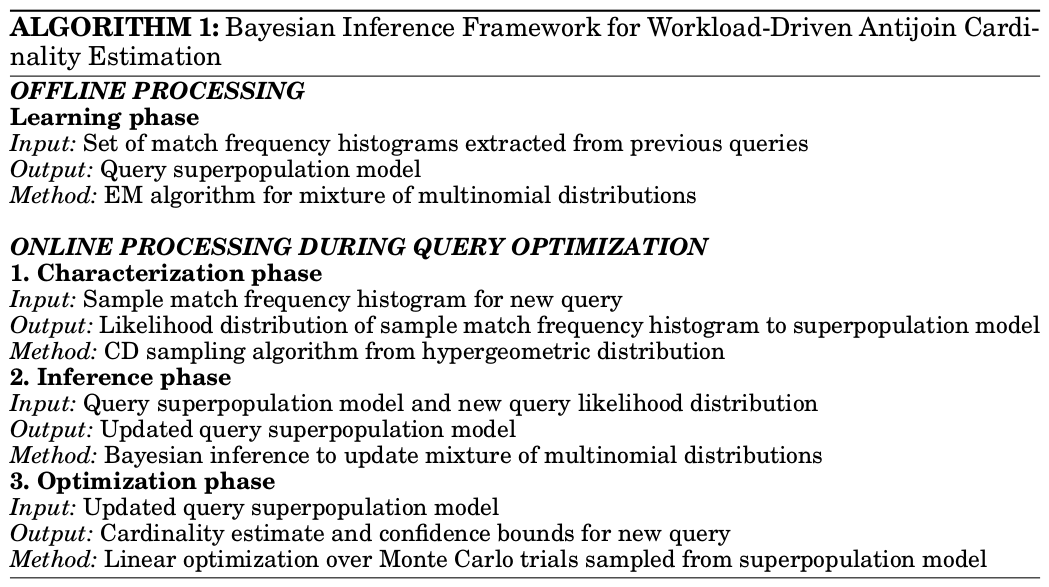

We introduce a four-phase Bayesian inference framework for antijoin cardinality estimation based on the following principles. First, we separate the model learning phase from the estimation process by defining a mixture model exclusively over past workload queries. These can be either join or antijoin predicates as long as they provide a match frequency histogram. Second, whereas model learning is an offline process, the learned model is not static. It is updated dynamically at runtime with data extracted from the current running query using a principled Bayesian statistics strategy. This results in improved estimation for similar queries executed in sequence. Third, we design an efficient algorithm for extracting samples without replacement from a population generated itself from a multinomial parametric distribution without even materializing the population.

The learning phase uses statistical methods to build a prior histogram model composed of a number of candidate match frequency histogram patterns. Each histogram pattern represents a class of (anti)join queries. A weight is assigned to each pattern indicating how likely a future histogram query matches that histogram pattern. Both the weights and the histogram patterns are learned offline from the historical query workload using an EM algorithm.

In the characterization phase, a likelihood distribution of the current sample histogram corresponding to each learned histogram pattern is generated. This is done using a novel Monte Carlo sampling algorithm that extracts a sample from the parametric representation without materializing the relations R and S. This is the main technical contribution we introduce in this work - a contribution that makes the proposed Bayesian inference framework suited for query optimization.

The inference phase uses the likelihood distribution computed in the characterization phase and the observed sample histogram to infer the posterior histogram probabilities. The prior weights of the histogram model are updated based upon the evidence seen in the sample histogram. The updated histogram model is then deemed as a superpopulation from which the query result hidden histogram is generated. It is important to notice that the characterization phase applies solely to the learned histogram patterns and not to the sample histogram observed at runtime.

The optimization phase generates a large number of histograms from the superpopulation computed in the inference phase. Each histogram is then used to instantiate a pair of outer and inner relations. For each relation pair, a sample pair is subsequently generated. As expected, this process is executed using the same Monte Carlo algorithm introduced in the characterization phase. The optimal estimator is computed such that it minimizes the sum-squared error (SSE) criterion across all the generated populations.

UC Merced | EECS | Home | Query Optimization